Introduction:

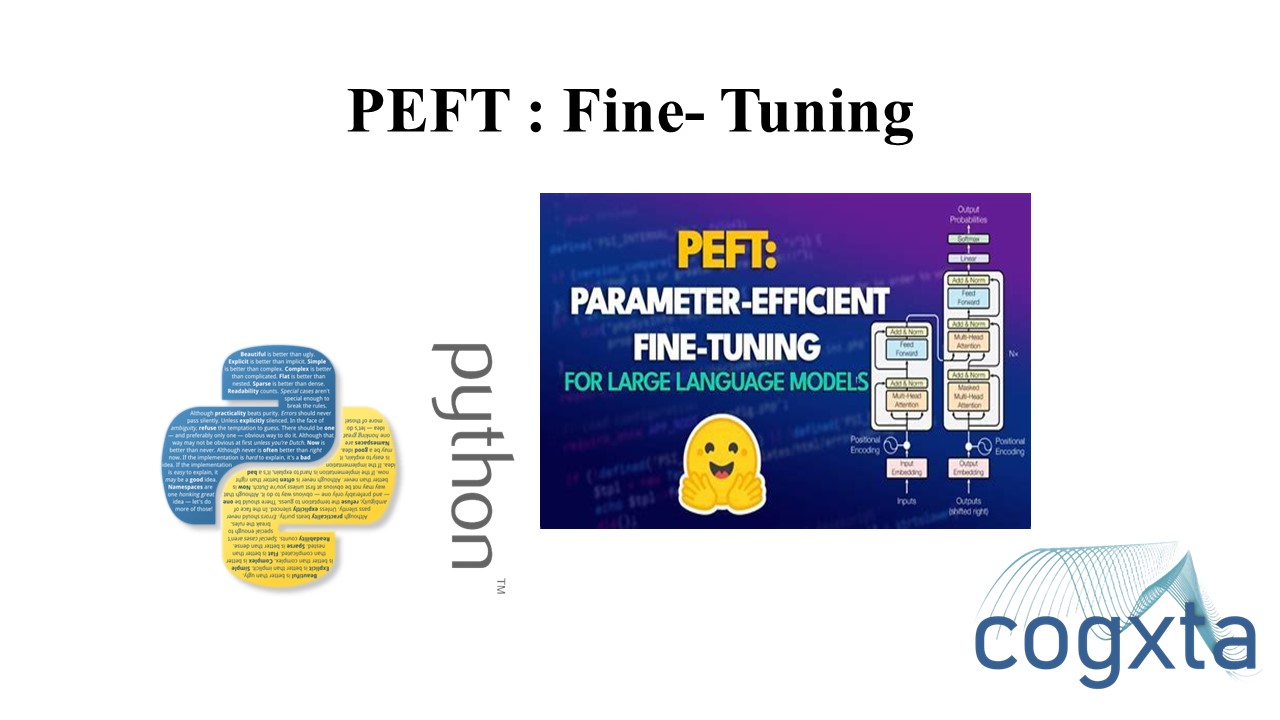

Large Language Models (LLMs) like GPT, T5, and BERT have shown remarkable performance in NLP tasks. However, fine-tuning these models on downstream tasks can be computationally expensive. Parameter-Efficient Fine-Tuning (PEFT) approaches aim to address this challenge by fine-tuning only a small number of parameters while freezing most of the pretrained model. In this blog post, we explore the motivation behind PEFT, its advantages, and how Hugging Face’s PEFT library can help in efficient fine-tuning.

Motivation for PEFT:

As LLMs grow in size, full fine-tuning becomes impractical on consumer hardware.

Storing and deploying fine-tuned models independently for each task is expensive.

PEFT reduces computational and storage costs by fine-tuning only a small number of parameters.

PEFT approaches have also been reported to match or outperform full fine-tuning in low-data regimes and to generalize better to out-of-domain scenarios.

PEFT Approaches:

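LoRA (Low-Rank Adaptation): Freezes the pretrained weights and trains a pair of small low-rank matrices whose product is added to selected weight matrices, so only a small fraction of the parameters is updated.

Prefix Tuning: Keeps the model frozen and trains a sequence of continuous vectors (a "prefix") that is prepended to the activations at every layer. The related P-Tuning v2 work reports that this kind of deep prompt tuning can be comparable to fine-tuning across model scales and tasks.

Prompt Tuning: Learns a small set of "soft prompt" embeddings that are prepended to the input embeddings while the model itself stays frozen.

P-Tuning: Also learns continuous prompt embeddings, here produced by a small trainable prompt encoder. It was introduced in the paper "GPT Understands, Too".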
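To make the LoRA idea concrete, here is a minimal sketch in plain Python, not the PEFT library's implementation. It assumes the usual LoRA formulation, where a frozen weight W of shape d x k is combined with trainable matrices B (d x r) and A (r x k) as W + (alpha / r) * B A. The helper names `matmul`, `lora_effective_weight`, and `lora_param_counts`, and the 4096 x 4096 layer size, are illustrative assumptions.

```python
# Minimal LoRA sketch in pure Python; shapes and values are illustrative only.

def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def lora_effective_weight(w, a, b, alpha, r):
    """Return W + (alpha / r) * B @ A, the combined weight after adaptation."""
    delta = matmul(b, a)  # (d x r) @ (r x k) -> (d x k)
    scale = alpha / r
    return [
        [w_ij + scale * d_ij for w_ij, d_ij in zip(w_row, d_row)]
        for w_row, d_row in zip(w, delta)
    ]


def lora_param_counts(d, k, r):
    """Trainable parameters for full fine-tuning vs. a rank-r LoRA adapter."""
    return d * k, r * (d + k)


# Toy example: a frozen 4 x 4 identity weight with a rank-1 adapter.
d, k, r, alpha = 4, 4, 1, 2
W = [[1.0 if i == j else 0.0 for j in range(k)] for i in range(d)]  # frozen
A = [[0.5] * k]                 # r x k, trainable
B = [[0.0] for _ in range(d)]   # d x r, trainable, initialised to zero

# With B = 0 the adapter contributes nothing, so training starts from the
# pretrained behaviour.
assert lora_effective_weight(W, A, B, alpha, r) == W

# Hypothetical 4096 x 4096 projection with r=8, matching the rank used later.
full, lora = lora_param_counts(4096, 4096, 8)
print(f"full: {full:,}  lora: {lora:,}  ratio: {lora / full:.4%}")
```

For this assumed layer size, the adapter trains 65,536 parameters in place of 16,777,216, which is where the savings in compute and checkpoint size come from.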

Use Cases:

LoRA via PEFT allows tuning a 3-billion-parameter model such as bigscience/T0_3B on consumer GPUs with limited memory, such as the Nvidia GeForce RTX 2080 Ti and RTX 3080.

With PEFT LoRA and bitsandbytes, the OPT-6.7b model can be tuned in INT8 in Google Colab.

Stable Diffusion DreamBooth training with PEFT fits on consumer hardware with 11GB of GPU memory.
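To see why INT8 matters for the OPT-6.7b use case above, a rough estimate of the memory taken by the weights alone can help. The sketch below is back-of-the-envelope arithmetic, not a measurement: it assumes 6.7 billion parameters (read off the model name) and ignores activations, gradients, and optimizer state. The helper `weight_memory_gb` is a hypothetical name.

```python
# Rough weight-only memory estimate at different precisions.
# Activations, gradients, and optimizer state are NOT included.

BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def weight_memory_gb(num_params, dtype):
    """Approximate memory for the weights alone, in GB (10**9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


num_params = 6_700_000_000  # assumed from the "6.7b" in the model name

for dtype in BYTES_PER_PARAM:
    print(f"{dtype}: ~{weight_memory_gb(num_params, dtype):.1f} GB")
```

Under these assumptions the weights drop from roughly 26.8 GB in fp32 to about 6.7 GB in INT8, and because LoRA trains only a small adapter, the gradient and optimizer overhead stays small by comparison.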

Training Your Model using PEFT:

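To prepare a model for fine-tuning with PEFT, wrap it with a LoRA configuration as in the following code snippet; the wrapped model can then be trained like any other model: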

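    from transformers import AutoModelForSeq2SeqLM
    from peft import get_peft_model, LoraConfig, TaskType

    # Base checkpoint to adapt; the tokenizer would be loaded from the same name.
    model_name_or_path = "bigscience/mt0-large"
    tokenizer_name_or_path = "bigscience/mt0-large"

    # LoRA settings: rank-8 update matrices, scaling factor 32, dropout 0.1 on the LoRA layers.
    peft_config = LoraConfig(
        task_type=TaskType.SEQ_2_SEQ_LM,
        inference_mode=False,
        r=8,
        lora_alpha=32,
        lora_dropout=0.1,
    )

    # Load the pretrained model and wrap it so that only the LoRA parameters are trainable.
    model = AutoModelForSeq2SeqLM.from_pretrained(model_name_or_path)
    model = get_peft_model(model, peft_config)

    # Report trainable vs. total parameter counts.
    model.print_trainable_parameters()

Conclusion: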

PEFT is a powerful approach to fine-tuning large language models efficiently: it reduces computational and storage costs while often keeping performance comparable to full fine-tuning. Hugging Face’s PEFT library integrates with the Transformers and Accelerate libraries, so researchers and practitioners can adapt state-of-the-art pretrained models to their specific use cases. This integration and the library’s ease of use make it a valuable tool for the AI community.
