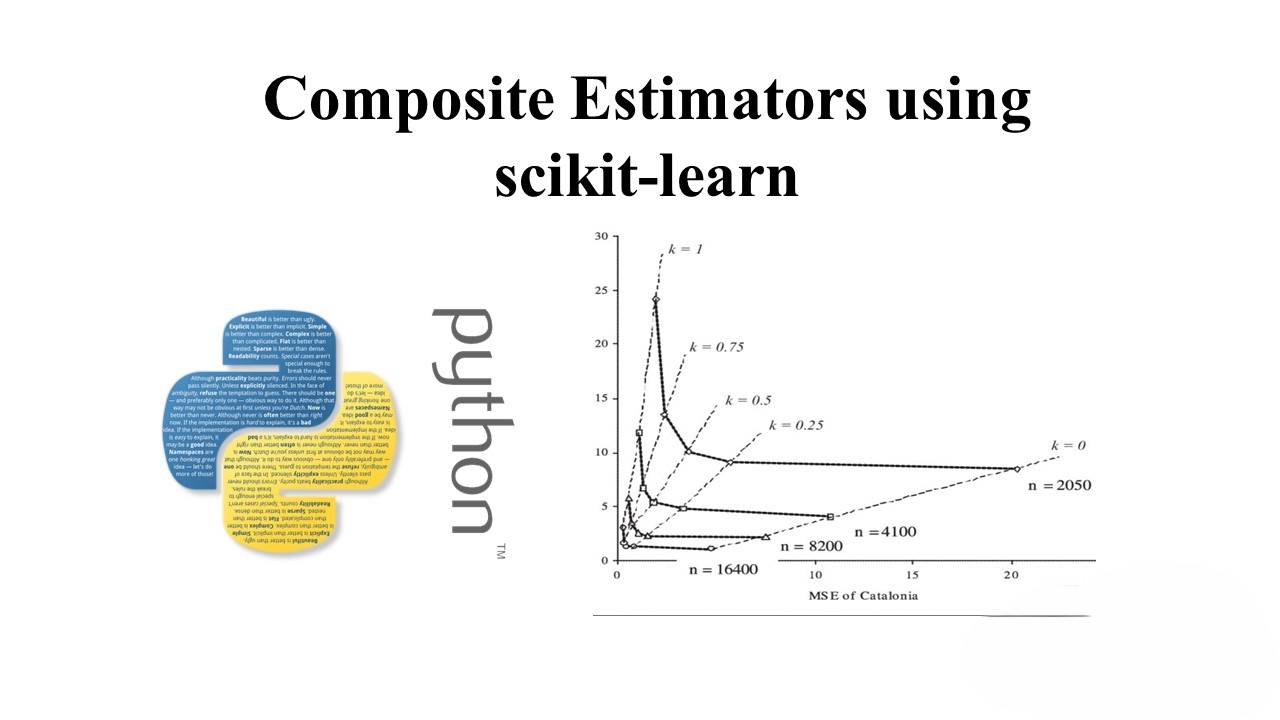

Composite Estimators using scikit-learn: A Comprehensive Guide

Agenda

- Introduction to Composite Estimators

- Pipelines

- TransformedTargetRegressor

- FeatureUnions

- ColumnTransformer

- GridSearch for Pipelines

1. Introduction to Composite Estimators

Composite estimators in scikit-learn chain one or more transformers with a final estimator so that the whole workflow behaves like a single model. Sequential composition is implemented by the Pipeline class, while FeatureUnion applies several transformers to the same input and concatenates their outputs to create derived features. Because a composite is fitted and applied as one object, it makes machine learning workflows more reusable and modular.

2. Pipelines

Before data reaches a learning algorithm, it usually needs preprocessing, such as handling missing values and scaling features, and these steps must run in a specific order. A Pipeline automates this by chaining transformers with a final estimator. Every intermediate step must implement both fit and transform; the final step only needs fit. Once fitted, the same pipeline applies identical preprocessing when making predictions.
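As a minimal sketch of that chaining, the snippet below builds a three-step pipeline on a tiny toy array. The data and the names `X_toy`, `y_toy`, and `toy_pipeline` are hypothetical and exist only for illustration.

```python
import numpy as np
from sklearn.impute import SimpleImputer
from sklearn.linear_model import LogisticRegression
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Toy data (hypothetical): two numeric features, one missing value
X_toy = np.array([[1.0, 200.0], [2.0, np.nan], [3.0, 180.0],
                  [8.0, 50.0], [9.0, 40.0], [10.0, 60.0]])
y_toy = np.array([0, 0, 0, 1, 1, 1])

toy_pipeline = Pipeline(steps=[
    ('imputer', SimpleImputer(strategy='mean')),  # intermediate step: fit + transform
    ('scaler', StandardScaler()),                 # intermediate step: fit + transform
    ('classifier', LogisticRegression()),         # final step: only needs fit
])

toy_pipeline.fit(X_toy, y_toy)

# predict() sends new rows through the fitted imputer and scaler first
toy_predictions = toy_pipeline.predict(np.array([[1.5, np.nan], [9.5, 45.0]]))
print(toy_predictions)
```

The imputer and scaler statistics are learned only from the data passed to fit, so new rows are preprocessed exactly as the training rows were.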

Let’s explore an example of predicting horror authors from text using various classifiers like Logistic Regression, Decision Tree, Naive Bayes, and Linear SVM.

# Import necessary libraries

import pandas as pd

from sklearn.pipeline import make_pipeline

from sklearn.linear_model import LogisticRegression

from sklearn.tree import DecisionTreeClassifier

from sklearn.naive_bayes import MultinomialNB

from sklearn.svm import LinearSVC

from sklearn.feature_extraction.text import CountVectorizer, TfidfTransformer

from sklearn.model_selection import train_test_split

# Load horror data

horror_train_data = pd.read_csv('data/horror-train.csv')

horror_train_data = horror_train_data[['text', 'author']]

# Hold out part of the labelled data for evaluation

trainX, testX, trainY, testY = train_test_split(

    horror_train_data['text'], horror_train_data['author'], random_state=0)

# Create pipelines for different models

pipelines = []

for model in [LogisticRegression(), DecisionTreeClassifier(), MultinomialNB(), LinearSVC()]:

    pipeline = make_pipeline(

        CountVectorizer(stop_words='english'),

        TfidfTransformer(),

        model)

    pipelines.append(pipeline)

# Train and evaluate each pipeline

for pipeline in pipelines:

    pipeline.fit(trainX, trainY)

    print(f"Accuracy: {pipeline.score(testX, testY)}")

# Make predictions (here on the held-out split; swap in a separate test file if one is available)

results = [pipeline.predict(testX) for pipeline in pipelines]

3. TransformedTargetRegressor

Linear regression models assume a roughly linear relationship between the features and the target, and they tend to fit poorly when the target is heavily skewed. TransformedTargetRegressor addresses this by transforming the target before fitting, for example with a logarithm or a quantile transform that brings its distribution closer to normal, and by automatically applying the inverse transformation to predictions so that they come back on the original scale. The listing below first fits a plain LinearRegression and reports its R2 score and mean absolute error, which serve as a baseline.

# load_boston was removed in scikit-learn 1.2, so this example uses the California Housing data instead

from sklearn.datasets import fetch_california_housing

from sklearn.linear_model import LinearRegression

from sklearn.model_selection import train_test_split

from sklearn.metrics import mean_absolute_error, r2_score

# Load the California Housing dataset (downloaded on first use)

housing = fetch_california_housing()

# Split the data into features (X) and target variable (y)

X = housing.data

y = housing.target

# Initialize the Linear Regression model

regressor = LinearRegression()

# Split the data into training and testing sets

X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=0)

# Train the Linear Regression model

regressor.fit(X_train, y_train)

# Evaluate the model

r2 = regressor.score(X_test, y_test)

print('R2 score: {0:.2f}'.format(r2))

# Make predictions

predictions = regressor.predict(X_test)

# Calculate mean absolute error

mae = mean_absolute_error(y_true=y_test, y_pred=predictions)

print('Mean Absolute Error: {0:.2f}'.format(mae))
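The baseline above does not transform the target. A minimal sketch of the wrapper itself follows, using synthetic data rather than the housing data: the target is deliberately generated as the exponential of a linear function, so a log transform should help. The names `X_syn`, `y_syn`, `plain`, and `ttr` are hypothetical.

```python
import numpy as np
from sklearn.compose import TransformedTargetRegressor
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import train_test_split

# Synthetic data (an assumption for illustration): log(y) is linear in X
rng = np.random.default_rng(0)
X_syn = rng.uniform(0, 1, size=(500, 3))
y_syn = np.exp(X_syn @ np.array([1.5, 2.0, 0.5]) + rng.normal(scale=0.1, size=500))

Xs_train, Xs_test, ys_train, ys_test = train_test_split(X_syn, y_syn, random_state=0)

# Baseline: fit directly on the skewed target
plain = LinearRegression().fit(Xs_train, ys_train)

# Fit on log(y); predictions are mapped back through exp automatically
ttr = TransformedTargetRegressor(
    regressor=LinearRegression(), func=np.log, inverse_func=np.exp)
ttr.fit(Xs_train, ys_train)

plain_r2 = plain.score(Xs_test, ys_test)
ttr_r2 = ttr.score(Xs_test, ys_test)
print('R2 plain: {0:.2f}'.format(plain_r2))
print('R2 transformed target: {0:.2f}'.format(ttr_r2))
```

Both scores are computed on the original scale of the target, so they are directly comparable. On real data the gain depends on how skewed the target actually is, and a transformer object such as QuantileTransformer can be passed through the `transformer` argument instead of a `func`/`inverse_func` pair.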

4. FeatureUnions

FeatureUnion combines several transformer objects into a single transformer. Each transformer is fitted independently on the same input (optionally in parallel via n_jobs), and their outputs are concatenated side by side into one feature matrix, which keeps feature engineering modular. We’ll demonstrate its use in predicting employee exits, where department, salary, and numerical attributes are processed by separate pipelines and then combined using FeatureUnion.
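Before the full employee example, here is a minimal sketch of the concatenation behaviour on the bundled iris data. The choice of PCA and SelectKBest is an assumption made purely for illustration.

```python
from sklearn.datasets import load_iris
from sklearn.decomposition import PCA
from sklearn.feature_selection import SelectKBest
from sklearn.pipeline import FeatureUnion

X_iris, y_iris = load_iris(return_X_y=True)

# Both transformers receive the same four input columns
toy_union = FeatureUnion([
    ('pca', PCA(n_components=2)),  # two derived components
    ('kbest', SelectKBest(k=1)),   # one original column, chosen by univariate score
])

X_combined = toy_union.fit_transform(X_iris, y_iris)
print(X_combined.shape)  # 150 rows, 2 + 1 columns
```

The output width is simply the sum of the widths produced by each transformer; FeatureUnion does not check whether the combined columns are redundant.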

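# Import necessary libraries

import pandas as pd

from sklearn.pipeline import Pipeline, FeatureUnion

from sklearn.base import BaseEstimator, TransformerMixin

from sklearn.preprocessing import OneHotEncoder, LabelBinarizer, MinMaxScaler

from sklearn.ensemble import RandomForestClassifier

from sklearn.model_selection import train_test_split

# Load employee data

emp_data = pd.read_csv('https://raw.githubusercontent.com/zekelabs/data-science-complete-tutorial/master/Data/HR_comma_sep.csv.txt')

emp_data.rename(columns={'sales':'dept'}, inplace=True)

# Column groups and target, assumed from the column names of this HR dataset

num_cols = ['satisfaction_level', 'last_evaluation', 'number_project', 'average_montly_hours', 'time_spend_company']

bin_cols = ['Work_accident', 'promotion_last_5years']

trainX, testX, trainY, testY = train_test_split(

    emp_data.drop(columns='left'), emp_data['left'], random_state=0)

# Define custom transformers

class ItemSelector(TransformerMixin, BaseEstimator):

    # Selects a single column (as a Series) from a DataFrame

    def __init__(self, key):

        self.key = key

    def fit(self, X, y=None):

        return self

    def transform(self, X, y=None):

        return X[self.key]

class MyLabelBinarizer(TransformerMixin, BaseEstimator):

    # Wraps LabelBinarizer, whose fit and transform take one argument, so it can be used inside a Pipeline

    def fit(self, X, y=None):

        self.encoder_ = LabelBinarizer().fit(X)

        return self

    def transform(self, X, y=None):

        return self.encoder_.transform(X)

class MultiItemSelector(TransformerMixin, BaseEstimator):

    # Selects several columns (as a DataFrame)

    def __init__(self, keys):

        self.keys = keys

    def fit(self, X, y=None):

        return self

    def transform(self, X, y=None):

        return X[self.keys]

class SalaryMapper(TransformerMixin, BaseEstimator):

    # Maps the ordinal salary labels to integers

    def fit(self, X, y=None):

        return self

    def transform(self, X, y=None):

        db = {'low': 1, 'medium': 2, 'high': 3}

        r = X.str.strip().map(db)

        return r.values.reshape(-1, 1)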

# Define pipelines for different types of features

pipeline_dept = Pipeline([

    ('selector', ItemSelector('dept')),

    ('lb', MyLabelBinarizer()),

])

pipeline_salary = Pipeline([

    ('selector', ItemSelector('salary')),

    ('sm', SalaryMapper())

])

pipeline_numbers = Pipeline([

    ('selector', MultiItemSelector(num_cols)),

    ('scaling', MinMaxScaler())

])

pipeline_bin = Pipeline([

    ('selector', MultiItemSelector(bin_cols))

])

# Combine pipelines using FeatureUnion

fu = FeatureUnion([

    ('dept_pipe', pipeline_dept),

    ('salary_pipe', pipeline_salary),

    ('numbers_pipe', pipeline_numbers),

    ('bin_pipe', pipeline_bin)

])

# Final pipeline

final_pipeline = Pipeline([

    ('union', fu),

    ('classifier', RandomForestClassifier(n_estimators=10))

])

# Train and evaluate the final pipeline

final_pipeline.fit(trainX, trainY)

print(f"Accuracy: {final_pipeline.score(testX, testY)}")

5. ColumnTransformer

Datasets often contain heterogeneous column types, and routing each column to the right preprocessing steps by hand, as the selector classes in the previous section do, is tedious. ColumnTransformer simplifies this by associating named columns directly with designated transformers and concatenating the results. We’ll apply it to the Titanic dataset, where numerical and categorical features are processed separately.

# Import necessary libraries

from sklearn.compose import ColumnTransformer

from sklearn.impute import SimpleImputer

from sklearn.preprocessing import StandardScaler, OneHotEncoder

from sklearn.pipeline import Pipeline

from sklearn.ensemble import RandomForestClassifier

from sklearn.model_selection import train_test_split

# Load Titanic data

titanic_data = pd.read_csv('https://raw.githubusercontent.com/zekelabs/data-science-complete-tutorial/master/Data/titanic-train.csv.txt', index_col='PassengerId')

# Define numerical and categorical columns

num_cols = ['Age', 'Fare']

cat_cols = ['Embarked', 'Sex', 'Pclass']

# Define pipelines for numerical and categorical features

pipeline_num = Pipeline(steps=[

    ('imputer', SimpleImputer(strategy='median')),

    ('scaling', StandardScaler())

])

pipeline_cat = Pipeline(steps=[

    ('imputer', SimpleImputer(strategy='constant', fill_value='missing')),

    ('encoding', OneHotEncoder(handle_unknown='ignore'))

])

# Combine numerical and categorical pipelines using ColumnTransformer

preprocessor = ColumnTransformer(

    transformers=[

        ('num', pipeline_num, num_cols),

        ('cat', pipeline_cat, cat_cols)

    ])

# Final pipeline with preprocessor and classifier

final_pipeline = Pipeline(steps=[

    ('preprocessor', preprocessor),

    ('classifier', RandomForestClassifier(random_state=42))

])

# Split the data

X_train, X_test, y_train, y_test = train_test_split(

    titanic_data.drop('Survived', axis=1),

    titanic_data['Survived'], test_size=0.2, random_state=42)

# Train and evaluate the final pipeline

final_pipeline.fit(X_train, y_train)

print(f"Accuracy: {final_pipeline.score(X_test, y_test)}")

6. GridSearch for Pipelines

Finally, the hyperparameters of both the transformers and the estimator inside a pipeline can be tuned together. A pipeline exposes every nested parameter under a name of the form step__parameter, so GridSearchCV can search over preprocessing choices and model settings in one cross-validated run. We’ll demonstrate this by performing a grid search on the previously defined pipeline for the Titanic dataset.
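To illustrate the naming scheme, the sketch below lists the nested parameter names of a small two-step pipeline and sets two of them. The step names `scaler` and `clf` are arbitrary choices for this illustration.

```python
from sklearn.linear_model import LogisticRegression
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

toy_pipe = Pipeline(steps=[
    ('scaler', StandardScaler()),
    ('clf', LogisticRegression()),
])

# Every nested parameter is reachable as <step name>__<parameter name>
tunable = sorted(name for name in toy_pipe.get_params() if '__' in name)
print(tunable)

# set_params uses the same naming scheme that GridSearchCV relies on
toy_pipe.set_params(clf__C=0.1, scaler__with_mean=False)
print(toy_pipe.named_steps['clf'].C)
```

When a step is itself a composite, the names nest further, which is why the grid for the Titanic pipeline contains keys such as preprocessor__num__imputer__strategy.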

# Import necessary libraries

from sklearn.model_selection import GridSearchCV

# Define the parameter grid for GridSearch

param_grid = {

    'preprocessor__num__imputer__strategy': ['mean', 'median'],

    'classifier__n_estimators': [50, 100, 200],

    'classifier__max_depth': [None, 10, 20, 30],

    'classifier__min_samples_split': [2, 5, 10],

    'classifier__min_samples_leaf': [1, 2, 4]

}

# Create GridSearchCV object

grid_search = GridSearchCV(final_pipeline, param_grid, cv=5, scoring='accuracy')

# Perform GridSearch on the data

grid_search.fit(X_train, y_train)

# Get the best parameters and evaluate the model

best_params = grid_search.best_params_

best_model = grid_search.best_estimator_

test_accuracy = best_model.score(X_test, y_test)

print(f"Best Parameters: {best_params}")

print(f"Test Accuracy with Best Model: {test_accuracy}")
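Besides best_params_, a fitted search object records the score of every parameter combination in cv_results_. The sketch below shows one way to inspect it, using a deliberately small search on the bundled iris data so that it runs quickly; the pipeline, grid, and variable names are assumptions for illustration rather than the Titanic setup above.

```python
import pandas as pd
from sklearn.datasets import load_iris
from sklearn.model_selection import GridSearchCV
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

X_iris, y_iris = load_iris(return_X_y=True)

toy_search = GridSearchCV(
    Pipeline([('scaler', StandardScaler()), ('svc', SVC())]),
    param_grid={'svc__C': [0.1, 1, 10]},
    cv=5,
)
toy_search.fit(X_iris, y_iris)

# One row per parameter combination, ordered by mean cross-validated score
cv_table = pd.DataFrame(toy_search.cv_results_)[
    ['param_svc__C', 'mean_test_score', 'std_test_score', 'rank_test_score']
].sort_values('rank_test_score')
print(cv_table)
```

Looking at the whole table, rather than only the winner, shows whether the best combination is clearly ahead or whether several settings perform about equally well.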

By the end of this comprehensive guide, you should have a solid understanding of using composite estimators, pipelines, and related tools in scikit-learn for efficient and modular machine learning workflows.

Stay tuned for more updates on scikit-learn and machine learning best practices!
